Every website should have a sitemap. It’s the fastest way for search engines and AI crawlers to discover every page you want indexed, and it’s the first thing I check when analyzing a competitor’s site.

This guide covers four ways to find any website’s XML sitemap, plus what to do once you have it.

What is a sitemap?

An XML sitemap is a file that lists every page on a website the owner wants search engines to discover. It usually includes each URL’s last-modified date, and sometimes priority and change frequency signals, though Google has confirmed it ignores the priority and changefreq fields in practice.
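To make the format concrete, here is a minimal sketch that reads a small sitemap with Python's standard library. The sample XML and the `parse_urlset` helper are made up for illustration; only the namespace URL comes from the sitemaps.org protocol.

```python
import xml.etree.ElementTree as ET

# Namespace used by the sitemaps.org protocol.
NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}

# Hypothetical two-URL sitemap, for illustration only.
SAMPLE_URLSET = """<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url>
    <loc>https://example.com/</loc>
    <lastmod>2026-01-15</lastmod>
  </url>
  <url>
    <loc>https://example.com/pricing</loc>
  </url>
</urlset>"""


def parse_urlset(xml_text):
    """Return (url, lastmod) tuples; lastmod is None when the tag is absent."""
    root = ET.fromstring(xml_text)
    entries = []
    for url in root.findall("sm:url", NS):
        loc = url.findtext("sm:loc", default="", namespaces=NS).strip()
        lastmod = url.findtext("sm:lastmod", default=None, namespaces=NS)
        entries.append((loc, lastmod.strip() if lastmod else None))
    return entries


for loc, lastmod in parse_urlset(SAMPLE_URLSET):
    print(loc, lastmod)
```

The sketch skips priority and changefreq on purpose, since those are the fields Google ignores.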

Most modern CMS platforms generate one automatically: WordPress (via Yoast, Rank Math, or WP core), Shopify, Webflow, and Squarespace all output a sitemap by default. The question is usually not “does this site have a sitemap” but “where is it.” That’s what the methods below are for.

Quick clarification: when people say “sitemap” they can mean one of two things. The XML sitemap is the machine-readable file that search engines read. The HTML sitemap is a human-readable page that links to the site’s pages, usually reachable from a link in the footer. The XML version is what matters for SEO. HTML sitemaps are optional.

Method 1: Try the common URLs

The fastest method, and the one that works most of the time. Add one of these paths to the end of any domain:

- /sitemap.xml

- /sitemap_index.xml

- /sitemap-index.xml

- /sitemap

- /sitemaps.xml

- /wp-sitemap.xml

WordPress-specific URLs worth trying on any WP site:

- Yoast SEO: /sitemap_index.xml

- Rank Math: /sitemap_index.xml

- WordPress core (no SEO plugin): /wp-sitemap.xml
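If you check a lot of domains, trying these paths by hand gets old. Below is a rough sketch of how the check could be automated with Python's standard library. The function names and the User-Agent string are my own placeholders, and `url_exists` makes real network requests, so treat it as a starting point rather than a finished tool.

```python
import urllib.request
from urllib.parse import urljoin

# The common paths listed above.
COMMON_PATHS = [
    "/sitemap.xml",
    "/sitemap_index.xml",
    "/sitemap-index.xml",
    "/sitemap",
    "/sitemaps.xml",
    "/wp-sitemap.xml",
]


def candidate_urls(base_url):
    """Build one candidate sitemap URL per common path."""
    return [urljoin(base_url, path) for path in COMMON_PATHS]


def url_exists(url, timeout=10):
    """Network call: True if the server ends up answering with HTTP 200."""
    request = urllib.request.Request(
        url, headers={"User-Agent": "sitemap-finder-sketch"}  # placeholder UA
    )
    try:
        with urllib.request.urlopen(request, timeout=timeout) as response:
            return response.status == 200
    except OSError:  # covers URLError, HTTPError (404 etc.) and timeouts
        return False


def find_sitemaps(base_url, exists=url_exists):
    """Return the candidate URLs for which the `exists` check passes."""
    return [url for url in candidate_urls(base_url) if exists(url)]


# Usage (hits the network):
# print(find_sitemaps("https://example.com"))
```

Some servers return a 200 status with an error page for missing paths, so it is worth opening whatever this finds and confirming it is actually XML.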

Most sitemaps you find this way are “sitemap index” files that link to sub-sitemaps split by content type (posts, pages, products, categories). That’s normal and actually better for large sites.
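To tell an index file from a regular sitemap in code, you can look at the root element: an index uses `sitemapindex`, a regular sitemap uses `urlset`. Here is a small sketch, assuming the file follows the sitemaps.org schema. The sample index and the `child_sitemaps` name are invented for the example.

```python
import xml.etree.ElementTree as ET

NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}

# Hypothetical sitemap index, for illustration only.
SAMPLE_INDEX = """<sitemapindex xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <sitemap><loc>https://example.com/post-sitemap.xml</loc></sitemap>
  <sitemap><loc>https://example.com/page-sitemap.xml</loc></sitemap>
</sitemapindex>"""


def child_sitemaps(xml_text):
    """Return sub-sitemap URLs if xml_text is a sitemap index, else []."""
    root = ET.fromstring(xml_text)
    # ElementTree reports namespaced tags as "{namespace}name".
    if root.tag.rsplit("}", 1)[-1] != "sitemapindex":
        return []
    return [
        loc.text.strip()
        for loc in root.findall("sm:sitemap/sm:loc", NS)
        if loc.text
    ]


print(child_sitemaps(SAMPLE_INDEX))
```

Each URL it returns is itself a sitemap, so you would fetch and parse those in turn to get the actual page list.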

Method 2: Check the robots.txt file

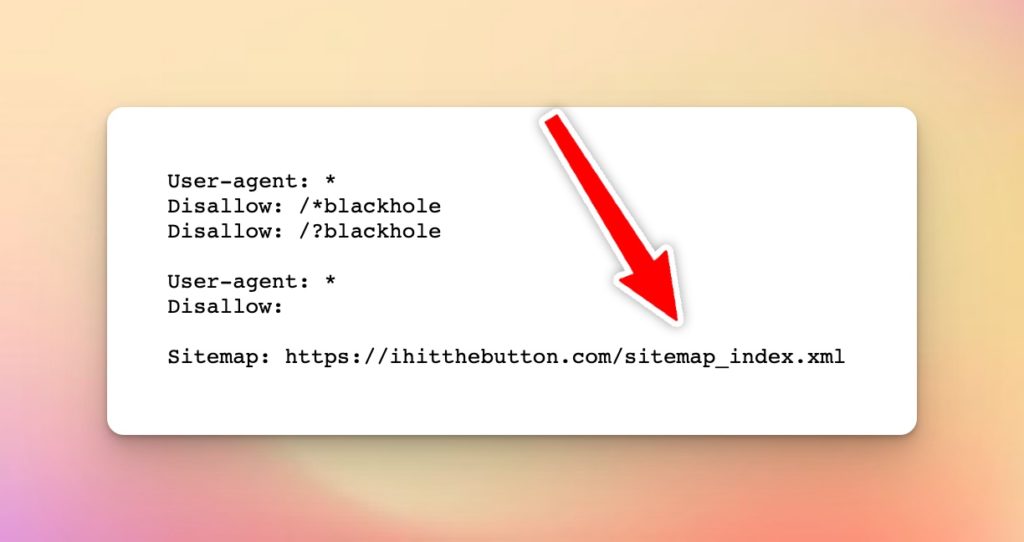

The most reliable method. Most well-configured sites list their sitemap in robots.txt, because that’s the file Google and other crawlers fetch first.

Steps:

- Go to any website

- In the address bar, type /robots.txt after the domain (e.g., example.com/robots.txt)

- Look for a line starting with Sitemap: followed by the full URL

If the site uses multiple sitemaps (news sitemap, image sitemap, video sitemap), each will be listed separately. On large sites you might see 5 to 10 Sitemap: lines. Copy all of them. A competitor’s news sitemap and product sitemap tell you different things than their main content sitemap.
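Pulling those lines out of a robots.txt file is easy to script. The sketch below works on text you have already downloaded. The sample file and the `extract_sitemaps` name are made up for illustration.

```python
# Hypothetical robots.txt content, for illustration only.
SAMPLE_ROBOTS = """User-agent: *
Disallow: /cart
Sitemap: https://example.com/sitemap_index.xml
sitemap: https://example.com/news-sitemap.xml
"""


def extract_sitemaps(robots_txt):
    """Return every URL declared on a 'Sitemap:' line (case-insensitive)."""
    urls = []
    for line in robots_txt.splitlines():
        line = line.split("#", 1)[0]  # drop trailing comments
        # Split on the first colon only, so the "https://" in the URL survives.
        field, _, value = line.partition(":")
        if field.strip().lower() == "sitemap" and value.strip():
            urls.append(value.strip())
    return urls


print(extract_sitemaps(SAMPLE_ROBOTS))
```

If you would rather not parse the file yourself, Python 3.8+ also ships `urllib.robotparser.RobotFileParser`, whose `site_maps()` method returns the same list after the file has been read.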

Method 3: Check Search Console (your own site only)

If it’s your own site, open Google Search Console and go to Sitemaps. This shows every sitemap Google knows about for your domain, when it was last read, how many URLs it contains, and any errors Google hit when parsing it.

This is also where you submit new sitemaps and monitor indexation over time. If you’re migrating a site or launching new content clusters, this is the first place to watch.

Method 4: Inspect page source or footer

Slower and less reliable, but works when the site doesn’t expose robots.txt correctly:

- Visit the homepage

- Scroll to the footer and look for a “Sitemap” link. It’s usually an HTML sitemap but may link to the XML

- View the page source (right-click → View Page Source) and search for sitemap or .xml
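The source search can be scripted too. Here is a rough sketch that scans a page's HTML for links whose href mentions "sitemap". The class and function names are my own, and the matching rule is a simple assumption, so expect false positives.

```python
from html.parser import HTMLParser


class SitemapLinkFinder(HTMLParser):
    """Collects href values from <a> and <link> tags that mention 'sitemap'."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag not in ("a", "link"):
            return
        href = dict(attrs).get("href") or ""
        if "sitemap" in href.lower():
            self.links.append(href)


def find_sitemap_links(html):
    finder = SitemapLinkFinder()
    finder.feed(html)
    return finder.links


# Hypothetical page source, for illustration only.
page = '<footer><a href="/about">About</a><a href="/sitemap.xml">Sitemap</a></footer>'
print(find_sitemap_links(page))
```

The hrefs come back exactly as written in the page, so relative paths still need to be joined with the site's domain.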

Honestly, this method rarely works in 2026. The robots.txt approach finds the sitemap on almost every site.

What to do once you’ve found a sitemap

For your own site:

- Submit it to Google Search Console (Sitemaps section)

- Re-submit it after structural changes or content migrations

- Monitor it weekly for indexation errors

- Confirm every page you want indexed is listed, and none you don’t

For a competitor’s site:

- Count pages by type (product, blog, landing, etc.) to understand their content volume

- Look for surprising URL patterns (pricing pages, free tools, comparison pages) that signal where they’re investing

- Cross-reference their top-ranking pages with what’s in their sitemap

- If you see 10,000+ URLs generated from templates, they’re likely doing programmatic SEO
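For the page count, one simple approach is to group sitemap URLs by their first path segment. This is a sketch under the assumption that the site's URL structure reflects content type (/blog/, /products/, and so on), which won't hold everywhere. The `count_by_section` helper and the sample URLs are hypothetical.

```python
from collections import Counter
from urllib.parse import urlparse


def count_by_section(urls):
    """Count URLs by first path segment, e.g. 'blog' for /blog/my-post."""
    counts = Counter()
    for url in urls:
        segments = [s for s in urlparse(url).path.split("/") if s]
        counts[segments[0] if segments else "(root)"] += 1
    return counts


# Hypothetical URLs, for illustration only.
urls = [
    "https://example.com/",
    "https://example.com/blog/first-post",
    "https://example.com/blog/second-post",
    "https://example.com/products/widget",
]
for section, count in count_by_section(urls).most_common():
    print(section, count)
```

A section with thousands of near-identical slugs is usually the programmatic SEO signal mentioned above.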

For auditing a new site you’re about to work on:

- Confirm the sitemap exists at all

- Check for 404s, redirects, or thin content being submitted

- Make sure URL slugs are clean

- Verify noindex pages aren’t being submitted (common WP misconfiguration)
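The noindex check is the easiest of these to automate. Below is a minimal sketch that looks for a robots meta tag containing noindex in HTML you have already fetched. The names are my own, and it only covers the meta tag: a noindex sent through the X-Robots-Tag HTTP header would need a separate check on the response headers.

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Sets self.noindex when a robots/googlebot meta tag contains 'noindex'."""

    def __init__(self):
        super().__init__()
        self.noindex = False

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = {name: (value or "") for name, value in attrs}
        if attr.get("name", "").lower() in ("robots", "googlebot"):
            directives = [d.strip().lower() for d in attr.get("content", "").split(",")]
            if "noindex" in directives:
                self.noindex = True


def has_noindex(html):
    parser = RobotsMetaParser()
    parser.feed(html)
    return parser.noindex


# Hypothetical page head, for illustration only.
print(has_noindex('<head><meta name="robots" content="noindex, follow"></head>'))
```

Run it over every URL in the sitemap and any page where it returns True is one that shouldn't be submitted.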

The short version

A sitemap usually lives at yoursite.com/sitemap.xml or yoursite.com/sitemap_index.xml. If it’s not at either, robots.txt will usually tell you where it is. Most modern CMS platforms generate one automatically, so the question isn’t whether to have a sitemap. It’s whether you know where yours lives and whether you’ve submitted it to Google.
